Designing for the moment your website actually gets attention

There is a very predictable pattern every time a founder walks into Dragons' Den. The pitch lands, thousands of people reach for their phones to Google the business, and within minutes the site is either crawling or completely gone. It gets blamed on 'too much traffic', as if success itself broke the thing, when in reality the infrastructure was probably only ever designed for a quiet Tuesday afternoon.

Lately I've noticed more and more sites that just feel... tired. Slow to respond, occasionally throwing a 502, sometimes fine, sometimes not. In many cases it is not a dramatic failure, just a system running close to the edge because it was never designed with peaks in mind, or because it is sharing infrastructure with who-knows-what, or because it is quietly serving a steady stream of bot traffic that nobody has really taken seriously.

High availability is not about ticking a box that says 'Multi-AZ enabled'. It is about stepping back and asking how this thing behaves when real life happens.

Start with the business model, not the instance size

Before we talk about ECS clusters or Lambda concurrency, we talk about the business. When does traffic spike? Is it predictable, like race weekends or product launches, or is it chaotic and campaign-driven? Does traffic double on a Friday afternoon newsletter, or is it a steady hum all week and then madness once a month?

Designing around the average is usually the mistake. Systems do not fail on average days. They fail on peak days, and peak days are often entirely foreseeable if you look at the commercial model rather than the CPU graph.

If you know that 80 percent of your revenue lands in a tight window, then your infrastructure should reflect that. Autoscaling policies can be tuned around known patterns, capacity can be biased toward expected surges, and testing can focus on the moments that matter rather than some artificial steady state that never really happens.
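As a rough illustration of biasing capacity toward known surges, the sketch below raises a capacity floor during recurring peak windows. The `PeakWindow` model, the task counts and the Friday newsletter window are all assumptions made up for the example. In practice this logic would normally live in scheduled scaling configuration rather than application code.

```python
from dataclasses import dataclass
from datetime import datetime, time


@dataclass(frozen=True)
class PeakWindow:
    """A recurring weekly window where extra capacity is wanted (hypothetical model)."""
    weekday: int    # Monday is 0, matching datetime.weekday()
    start: time
    end: time
    min_tasks: int  # capacity floor to hold during the window


def minimum_tasks(now: datetime, baseline: int, windows: list[PeakWindow]) -> int:
    """Return the capacity floor for `now`: the baseline or the largest matching peak floor."""
    floors = [
        w.min_tasks
        for w in windows
        if w.weekday == now.weekday() and w.start <= now.time() < w.end
    ]
    return max([baseline, *floors])


# Assumed example: a Friday afternoon newsletter that reliably doubles traffic.
windows = [PeakWindow(weekday=4, start=time(15, 0), end=time(18, 0), min_tasks=8)]
print(minimum_tasks(datetime(2024, 3, 8, 16, 30), baseline=4, windows=windows))  # Friday: 8
print(minimum_tasks(datetime(2024, 3, 5, 16, 30), baseline=4, windows=windows))  # Tuesday: 4
```

The floor is applied before the surge rather than in reaction to it, so reactive autoscaling only has to handle what the forecast missed.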

Elasticity is powerful, but only if the app allows it

On Amazon Web Services, scaling out is mechanically straightforward. ECS will happily run more tasks, whether on EC2 or Fargate. Lambda will spin up additional executions at an impressive rate. The cloud is not usually the bottleneck.

The application often is.

If session state is tied to a single container, horizontal scaling becomes awkward. If health checks always return 200 even when the database is on fire, the load balancer has no idea anything is wrong. If the database connection pool is undersized, doubling your task count may simply move the bottleneck from CPU to connection exhaustion.
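To make the health check point concrete, here is a minimal sketch of a check that reports on its dependencies instead of always answering 200. The function name and the shape of the `checks` mapping are assumptions for illustration, and the dependency probes are stand-ins for real connectivity tests.

```python
from typing import Callable


def health_response(checks: dict[str, Callable[[], bool]]) -> tuple[int, dict[str, str]]:
    """Run each dependency check and return an HTTP status plus per-dependency detail."""
    results: dict[str, str] = {}
    for name, check in checks.items():
        try:
            results[name] = "ok" if check() else "failing"
        except Exception:
            # A check that raises is treated the same as one that returns False.
            results[name] = "failing"
    status = 200 if all(state == "ok" for state in results.values()) else 503
    return status, results


def database_is_reachable() -> bool:
    """Stand-in for a real probe such as running a trivial query (hypothetical)."""
    raise ConnectionError("database is on fire")


print(health_response({"database": database_is_reachable, "cache": lambda: True}))
# (503, {'database': 'failing', 'cache': 'ok'})
```

Whether a shared dependency should fail the check is a judgment call. If every target depends on the same database, a strict check can take them all out of rotation at once, so some teams keep a shallow check for the load balancer and a deeper one for alerting.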

With Lambda, concurrency can scale rapidly, but downstream systems still have limits. A burst of invocations hitting a relational database with tight connection caps can cause more harm than good. In those cases we tend to introduce queues, limit concurrency deliberately, or move expensive work out of the request path altogether.
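Limiting concurrency deliberately can be sketched with a semaphore: however many jobs arrive, only a fixed number talk to the database at once. The cap of 5 and the `fake_query` coroutine are assumptions for the example. On Lambda itself the comparable lever would be a concurrency limit on the function, not in-process code.

```python
import asyncio

MAX_DB_CONCURRENCY = 5  # assumed cap, chosen to sit below the database's connection limit


async def run_bounded(jobs, limit):
    """Run all jobs, but never more than `limit` at a time. Returns (results, peak in flight)."""
    semaphore = asyncio.Semaphore(limit)
    in_flight = 0
    peak = 0

    async def guarded(job):
        nonlocal in_flight, peak
        async with semaphore:  # waits here while `limit` jobs are already running
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await job()
            finally:
                in_flight -= 1

    results = await asyncio.gather(*(guarded(job) for job in jobs))
    return list(results), peak


async def fake_query():
    """Stand-in for a database call (hypothetical)."""
    await asyncio.sleep(0.01)
    return "row"


# A burst of 50 requests still produces at most MAX_DB_CONCURRENCY concurrent queries.
results, peak = asyncio.run(run_bounded([fake_query] * 50, MAX_DB_CONCURRENCY))
print(len(results), peak)
```

The burst takes longer to clear, but the database sees a steady, survivable load instead of fifty simultaneous connections.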

Elasticity works best when the application is stateless, decoupled and designed to share load cleanly across multiple instances and availability zones.

Unwanted traffic is still traffic

One of the more sobering realisations for many clients is how much of their traffic is not customers at all. It is bots scraping content, automated scanners wandering through random URLs looking for something exploitable, scripts poking at old WordPress paths on a stack that has never seen WordPress, and the occasional overenthusiastic client retrying failed requests in tight loops until something gives.

Left unchecked, your application will serve all of it quite happily.

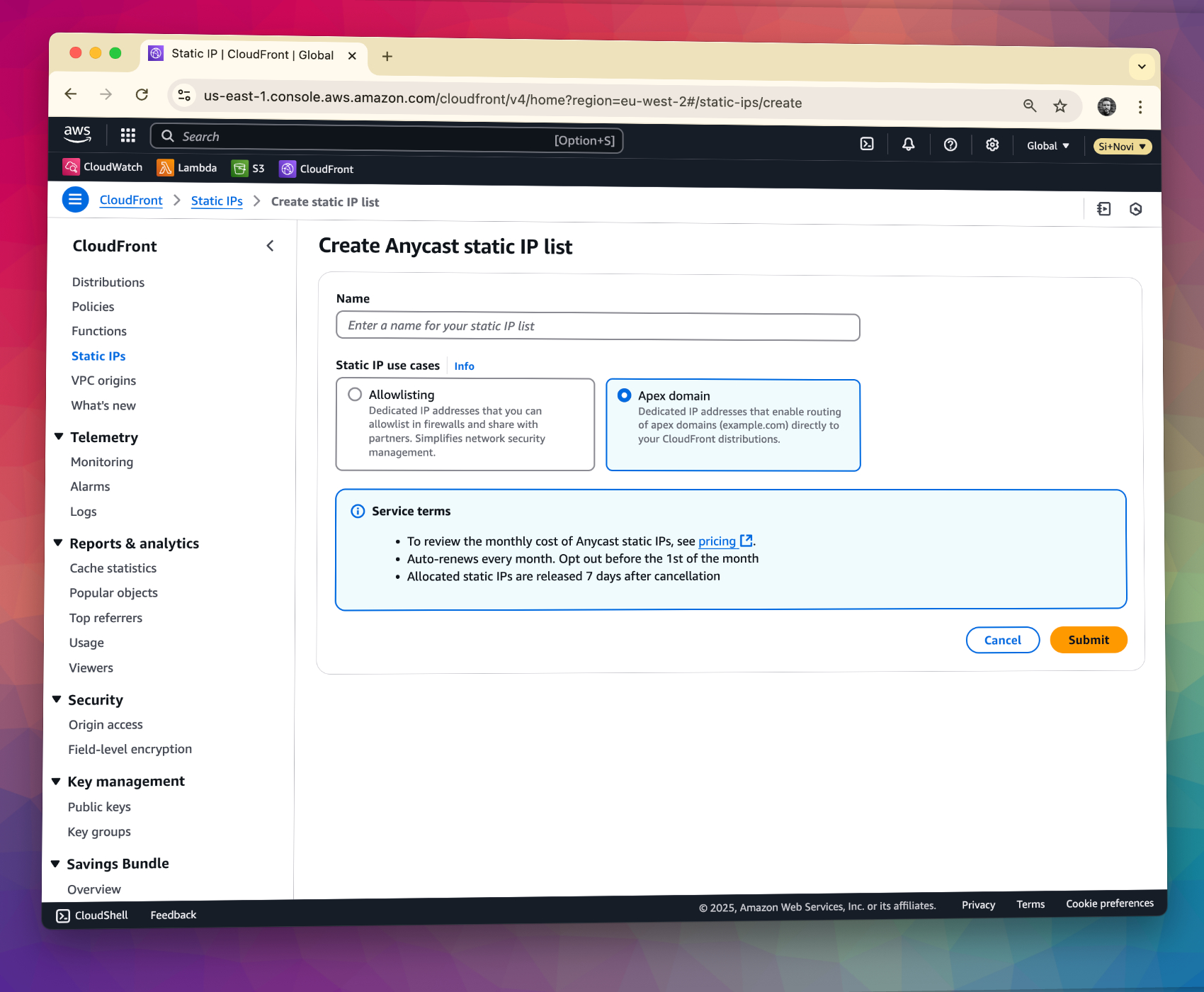

This is where WAF and CloudFront earn their keep. Managed rule sets take care of a lot of commodity attack patterns. Rate-based rules allow you to say, calmly and automatically, that 400 requests per minute to a login endpoint from a single IP is not normal behaviour. Bot control lets you distinguish between genuine search engine crawlers and suspicious automation so that you can treat them differently rather than blocking blindly.
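The idea behind a rate-based rule can be shown with a small sliding-window counter. This is only an illustration of the logic, not how WAF implements it. The class name is made up, and the threshold reuses the 400 requests per minute figure from above.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `limit` requests per key within any `window_seconds` span."""

    def __init__(self, limit: int, window_seconds: float):
        self.limit = limit
        self.window = window_seconds
        self._hits: dict[str, deque] = defaultdict(deque)

    def allow(self, key: str, now: float) -> bool:
        hits = self._hits[key]
        # Forget requests that have aged out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return False
        hits.append(now)
        return True


# One IP sends 450 login attempts in 45 seconds; only the first 400 get through.
limiter = SlidingWindowLimiter(limit=400, window_seconds=60)
decisions = [limiter.allow("203.0.113.7", now=i * 0.1) for i in range(450)]
print(decisions.count(True), decisions.count(False))  # 400 50
```

Keying on the client IP is the simplest choice. The same structure works for any key that identifies the caller, such as an API token.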

We often add pragmatic rules around excessive 404 responses as well. If a client is requesting dozens of non-existent paths in quick succession, that is rarely someone browsing politely. Blocking that at the edge means the request never reaches your containers, never opens a database connection, never consumes application CPU. It is a small decision that adds up quickly.
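A rule of that kind boils down to counting recent 404s per client and blocking for a while once the count gets silly. The sketch below shows the shape of that logic. The thresholds, the block duration and the class name are all assumptions, and a real deployment would express this as an edge rule rather than application code.

```python
from collections import defaultdict, deque


class NotFoundTracker:
    """Temporarily block clients that trigger too many 404s in a short window."""

    def __init__(self, max_404s: int, window_seconds: float, block_seconds: float):
        self.max_404s = max_404s
        self.window = window_seconds
        self.block_seconds = block_seconds
        self._misses: dict[str, deque] = defaultdict(deque)
        self._blocked_until: dict[str, float] = {}

    def record(self, client: str, status: int, now: float) -> None:
        """Note a response; only 404s count toward the threshold."""
        if status != 404:
            return
        misses = self._misses[client]
        misses.append(now)
        while misses and now - misses[0] >= self.window:
            misses.popleft()
        if len(misses) > self.max_404s:
            self._blocked_until[client] = now + self.block_seconds

    def is_blocked(self, client: str, now: float) -> bool:
        return self._blocked_until.get(client, 0.0) > now


tracker = NotFoundTracker(max_404s=20, window_seconds=60, block_seconds=300)
for second in range(25):  # a scanner probing one missing path per second
    tracker.record("scanner", 404, now=float(second))
tracker.record("visitor", 404, now=5.0)  # one mistyped URL is not an attack

print(tracker.is_blocked("scanner", now=30.0))  # True
print(tracker.is_blocked("visitor", now=30.0))  # False
```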

High availability is partly about capacity, but it is also about refusing to waste it.

Build for scale by offloading aggressively

A common anti-pattern is letting the application server do everything: serving large images, streaming downloads, rendering identical responses repeatedly for anonymous users and performing heavy computations inline with web requests. It works when traffic is low, then creaks under load.

Static assets belong in S3, fronted by CloudFront. That single move can remove a surprising amount of pressure from the origin. Assets are cached at the edge, delivered closer to users and scaled automatically. Your containers can then focus on actual application logic rather than acting as a file server.

Background processing should be decoupled from user-facing requests. Queues absorb spikes. Workers process jobs at a controlled rate. Managed services such as RDS, ElastiCache and S3 are designed to scale in ways that a single VM simply is not.
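A toy simulation shows what "queues absorb spikes" means in practice. Jobs arrive in uneven bursts, workers drain at a fixed rate, and the backlog grows and shrinks while the workers never see more than they can handle. The arrival numbers and drain rate are invented for the example.

```python
def simulate_backlog(arrivals: list[int], drain_per_tick: int) -> list[int]:
    """Return the queue depth after each tick, given arrivals per tick and a fixed worker rate."""
    backlog = 0
    depths = []
    for arriving in arrivals:
        backlog += arriving                      # new jobs join the queue
        backlog -= min(drain_per_tick, backlog)  # workers take at most their fixed share
        depths.append(backlog)
    return depths


# Quiet traffic, then a burst of 50 jobs in a single tick.
print(simulate_backlog([2, 2, 50, 2, 2, 0, 0, 0, 0, 0], drain_per_tick=10))
# [0, 0, 40, 32, 24, 14, 4, 0, 0, 0]
```

The cost of the spike is latency on background work, which is usually a far better trade than failed user-facing requests.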

The more work you can push to services that are effectively 'limitless' from your perspective, the more headroom your core application retains when traffic surges.

Caching is where performance is won or lost

Caching tends to be either ignored or overused.

At the edge, CloudFront can cache not just assets but API responses and even full HTML pages. For content that does not change per user, this can reduce origin load dramatically. But cache keys need care. Forget to vary by query string or header and you may serve the wrong thing. Cache personalised content and you create problems that are far worse than a slow page.
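The cache key problem is easier to see in code. The sketch below builds a key from the path, an allowlist of query parameters that actually change the response, and the headers the response varies on. The parameter and header names are assumptions for illustration. At the edge this would be expressed as cache configuration rather than a function.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit

# Hypothetical allowlists: only these inputs are assumed to change the response.
CACHE_QUERY_PARAMS = {"page", "category"}
CACHE_HEADERS = ("accept-language",)


def cache_key(url: str, headers: dict[str, str]) -> str:
    """Build a key from the path plus only the query params and headers that matter."""
    parts = urlsplit(url)
    # Drop tracking parameters and sort the rest so ordering does not split the cache.
    params = sorted((k, v) for k, v in parse_qsl(parts.query) if k in CACHE_QUERY_PARAMS)
    lowered = {name.lower(): value for name, value in headers.items()}
    varied = [f"{name}={lowered.get(name, '')}" for name in CACHE_HEADERS]
    return "|".join([parts.path, urlencode(params), *varied])


print(cache_key("/products?page=2&utm_source=mail&category=shoes", {"Accept-Language": "en-GB"}))
# /products|category=shoes&page=2|accept-language=en-GB
```

Leaving `page` out of the allowlist would serve page 1 to everyone, while including `utm_source` would fragment the cache for no benefit. Both mistakes are easy to make and hard to spot.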

For content-heavy sites, short-lived HTML caching for anonymous users can transform performance. A page that would normally execute several database queries and render templates becomes a simple cache lookup for the majority of traffic. Multiply that by thousands of requests and the impact is obvious.
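One way to express this is in the `Cache-Control` header the origin sends. The sketch below picks a header based on whether the request carries a session cookie. The cookie name and the 60 second TTL are assumptions. It also assumes the CDN is configured so that requests carrying the session cookie are not answered from the anonymous cache.

```python
SESSION_COOKIE = "session_id"  # hypothetical cookie name


def cache_control_for(request_cookies: dict[str, str], ttl_seconds: int = 60) -> str:
    """Choose a Cache-Control value: short shared caching for anonymous users, none otherwise."""
    if SESSION_COOKIE in request_cookies:
        # Personalised page: keep it out of every shared cache.
        return "private, no-store"
    # Anonymous page: shared caches may hold it briefly; browsers revalidate each time.
    return f"public, max-age=0, s-maxage={ttl_seconds}"


print(cache_control_for({}))                       # public, max-age=0, s-maxage=60
print(cache_control_for({"session_id": "abc123"}))  # private, no-store
```

Even a TTL this short means the origin renders a popular page roughly once a minute per edge location instead of once per visitor.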

At the application layer, Redis is invaluable for session storage and frequently accessed lookups. It reduces database pressure and smooths out spikes. But cache invalidation still needs thought. Expiry times, write-through strategies and fallback behaviour when the cache is cold all matter. Cache should accelerate a well-behaved system, not hide an inefficient one.
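The expiry and fallback concerns can be sketched with a cache-aside helper. An in-memory `TTLCache` stands in for Redis here so the example runs on its own. The class and function names are assumptions, and with Redis the `get` and `set` calls would map onto reads and writes with an expiry.

```python
import time


class TTLCache:
    """In-memory stand-in for a cache with per-key expiry (not Redis itself)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._store = {}

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._store[key]  # expired: behave as a miss
            return None
        return value

    def set(self, key, value, ttl):
        self._store[key] = (value, self._clock() + ttl)


def get_or_load(cache, key, loader, ttl):
    """Cache-aside read: try the cache, fall back to `loader`, then repopulate."""
    try:
        cached = cache.get(key)
    except Exception:
        cached = None  # cache unavailable: fall back to the source of truth
    if cached is not None:
        return cached
    value = loader(key)
    try:
        cache.set(key, value, ttl)
    except Exception:
        pass  # a failed cache write should not fail the request
    return value


cache = TTLCache()
print(get_or_load(cache, "country:GB", lambda k: "United Kingdom", ttl=300))  # loads, then caches
print(get_or_load(cache, "country:GB", lambda k: "never called", ttl=300))    # served from cache
```

Note the cold-cache case: when many requests miss at once, they all call the loader together, which is exactly the spike the cache was meant to prevent. That is one reason expiry times and warm-up behaviour deserve deliberate thought.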

Resilience across availability zones

Single availability zone deployments are cheaper and simpler, right up until they are not.

We generally deploy across at least two zones, with load balancers distributing traffic and replacing unhealthy targets automatically. Databases are configured for Multi-AZ where the business case justifies it, so that failover is automatic rather than a panicked manual exercise.

The aim is not perfection, it is resilience. When something degrades, traffic shifts. When an instance fails, another takes its place. Users ideally do not notice.
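The "traffic shifts" behaviour can be illustrated with a tiny round-robin balancer that skips unhealthy targets. This is a simplified model, not how a managed load balancer works internally, and the target and zone names are made up.

```python
from dataclasses import dataclass


@dataclass
class Target:
    name: str
    zone: str
    healthy: bool = True


class RoundRobinBalancer:
    """Rotate through targets in order, skipping any marked unhealthy."""

    def __init__(self, targets: list[Target]):
        self.targets = list(targets)
        self._next = 0

    def pick(self) -> Target:
        count = len(self.targets)
        for offset in range(count):
            candidate = self.targets[(self._next + offset) % count]
            if candidate.healthy:
                self._next = (self._next + offset + 1) % count
                return candidate
        raise RuntimeError("no healthy targets available")


targets = [Target("app-a1", "zone-a"), Target("app-b1", "zone-b")]
balancer = RoundRobinBalancer(targets)
print([balancer.pick().name for _ in range(4)])  # ['app-a1', 'app-b1', 'app-a1', 'app-b1']

# Simulate zone-a degrading: every request now lands in zone-b.
targets[0].healthy = False
print([balancer.pick().name for _ in range(4)])  # ['app-b1', 'app-b1', 'app-b1', 'app-b1']
```

The example also shows why the surviving zone needs headroom. After the failure, `app-b1` carries double its usual share.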

In summary

High availability is not magic and it is not a single feature toggle. It is the accumulation of sensible, sometimes unglamorous decisions:

- Understand real traffic patterns and design for peaks, not averages

- Scale horizontally with proper guardrails around databases and downstream systems

- Filter and rate limit unwanted traffic at the edge

- Offload static and heavy work to services built to scale

- Use caching carefully and deliberately

- Monitor the system so you can see problems before users do

If your website collapses the moment it receives attention, that is not success exposing a flaw in the cloud. It is a design that never expected to succeed.

When done properly, a traffic spike should feel almost boring. Instances scale out, caches warm up, unwanted traffic is filtered quietly at the edge and users simply carry on. That kind of boring is exactly what you want.